21. Linear State Space Models

“We may regard the present state of the universe as the effect of its past and the cause of its future” – Marquis de Laplace

In addition to what’s in Anaconda, this lecture will need the following libraries:

!pip install quantecon


21.1. Overview

This lecture introduces the linear state space dynamic system.

The linear state space system is a generalization of the scalar AR(1) process we studied before.

This model is a workhorse that carries a powerful theory of prediction.

Its many applications include:

- representing dynamics of higher-order linear systems
- predicting the position of a system $j$ steps into the future
- predicting a geometric sum of future values of a variable like
    - non-financial income
    - dividends on a stock
    - the money supply
    - a government deficit or surplus, etc.
- a key ingredient of useful models, such as
    - Friedman’s permanent income model of consumption smoothing.
    - Barro’s model of smoothing total tax collections.
    - the rational expectations version of Cagan’s model of hyperinflation.
    - Sargent and Wallace’s “unpleasant monetarist arithmetic,” etc.

Let’s start with some imports:

import matplotlib.pyplot as plt
import numpy as np
from quantecon import LinearStateSpace
from scipy.stats import norm
import random

21.2. The Linear State Space Model

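The objects in play are:

- An $n \times 1$ vector $x_t$ denoting the state at time $t = 0, 1, 2, \ldots$.
- An IID sequence of $m \times 1$ random vectors $w_t \sim N(0, I)$.
- A $k \times 1$ vector $y_t$ of observations at time $t = 0, 1, 2, \ldots$.
- An $n \times n$ matrix $A$ called the transition matrix.
- An $n \times m$ matrix $C$ called the volatility matrix.
- A $k \times n$ matrix $G$ sometimes called the output matrix.

Here is the linear state-space system

$$
\begin{aligned}
x_{t+1} & = A x_t + C w_{t+1} \\
y_t & = G x_t \\
x_0 & \sim N(\mu_0, \Sigma_0)
\end{aligned}
\tag{21.1}
$$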
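To see how the primitives generate sample paths, here is a minimal NumPy sketch that iterates (21.1) directly. The helper `simulate_lss` is hypothetical. It is not QuantEcon's `LinearStateSpace` API, which the lecture uses below. It assumes `C` is passed as a 2-D $n \times m$ array.

```python
import numpy as np


def simulate_lss(A, C, G, mu_0, Sigma_0, ts_length, seed=0):
    """Simulate x_{t+1} = A x_t + C w_{t+1}, y_t = G x_t, x_0 ~ N(mu_0, Sigma_0).

    Hypothetical helper for illustration. Returns x with shape (n, ts_length)
    and y with shape (k, ts_length).
    """
    rng = np.random.default_rng(seed)
    A, C, G = (np.atleast_2d(np.asarray(M, dtype=float)) for M in (A, C, G))
    n, m = C.shape
    x = np.empty((n, ts_length))
    # Draw the initial condition x_0 from N(mu_0, Sigma_0)
    x[:, 0] = rng.multivariate_normal(np.asarray(mu_0, dtype=float),
                                      np.asarray(Sigma_0, dtype=float))
    for t in range(ts_length - 1):
        w = rng.standard_normal(m)            # w_{t+1} ~ N(0, I)
        x[:, t + 1] = A @ x[:, t] + C @ w     # state transition
    y = G @ x                                 # observation equation, all dates
    return x, y
```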

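21.2.1. Primitives

The primitives of the model are

1. the matrices $A, C, G$
2. the shock distribution, which we have specialized to $N(0, I)$
3. the distribution of the initial condition $x_0$, which we have set to $N(\mu_0, \Sigma_0)$

Given $A, C, G$ and draws of $x_0$ and $w_1, w_2, \ldots$, the model (21.1) pins down the values of the sequences $\{x_t\}$ and $\{y_t\}$.

Even without these draws, the primitives 1–3 pin down the probability distributions of $\{x_t\}$ and $\{y_t\}$.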

Later we’ll see how to compute these distributions and their moments.

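21.2.1.1. Martingale Difference Shocks

We’ve made the common assumption that the shocks are independent standardized normal vectors.

But some of what we say will be valid under the weaker assumption that $\{w_{t+1}\}$ is a martingale difference sequence.

A martingale difference sequence is a sequence that is zero mean when conditioned on past information.

In the present case, since $\{x_t\}$ is our state sequence, this means that it satisfies

$$
\mathbb{E}[w_{t+1} \mid x_t, x_{t-1}, \ldots] = 0
$$

This is a weaker condition than that $\{w_t\}$ is IID with $w_{t+1} \sim N(0, I)$.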

21.2.2. Examples

By appropriate choice of the primitives, a variety of dynamics can be represented in terms of the linear state space model.

The following examples help to highlight this point.

They also illustrate the wise dictum “finding the state is an art.”

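21.2.2.1. Second-order Difference Equation

Let $\{y_t\}$ be a deterministic sequence that satisfies

$$
y_{t+1} = \phi_0 + \phi_1 y_t + \phi_2 y_{t-1}
\quad \text{s.t.} \quad
y_0, y_{-1} \text{ given}
\tag{21.2}
$$

To map (21.2) into our state space system (21.1), we set

$$
x_t =
\begin{bmatrix} 1 \\ y_t \\ y_{t-1} \end{bmatrix}
\qquad
A =
\begin{bmatrix}
1 & 0 & 0 \\
\phi_0 & \phi_1 & \phi_2 \\
0 & 1 & 0
\end{bmatrix}
\qquad
C =
\begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}
\qquad
G = \begin{bmatrix} 0 & 1 & 0 \end{bmatrix}
$$

You can confirm that under these definitions, (21.1) and (21.2) agree.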
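The agreement of (21.1) and (21.2) can also be checked numerically. The sketch below computes the same path twice: once from the scalar recursion, and once by iterating the state-space matrices. It assumes NumPy, and `second_order_paths` is a hypothetical helper name.

```python
import numpy as np


def second_order_paths(phi0, phi1, phi2, y0, y_m1, T):
    """Return y_0, ..., y_{T-1} computed (a) directly from (21.2) and
    (b) by iterating x_{t+1} = A x_t and reading off y_t = G x_t.
    Hypothetical helper for illustration."""
    # (a) Direct recursion y_{t+1} = phi0 + phi1 y_t + phi2 y_{t-1}
    direct = [y_m1, y0]
    for _ in range(T - 1):
        direct.append(phi0 + phi1 * direct[-1] + phi2 * direct[-2])
    direct = np.array(direct[1:])          # drop y_{-1}, keep y_0 .. y_{T-1}

    # (b) State-space form with x_t = (1, y_t, y_{t-1})'
    A = np.array([[1.0, 0.0, 0.0],
                  [phi0, phi1, phi2],
                  [0.0, 1.0, 0.0]])
    G = np.array([0.0, 1.0, 0.0])
    x = np.array([1.0, y0, y_m1])
    via_lss = []
    for _ in range(T):
        via_lss.append(G @ x)
        x = A @ x
    return direct, np.array(via_lss)
```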

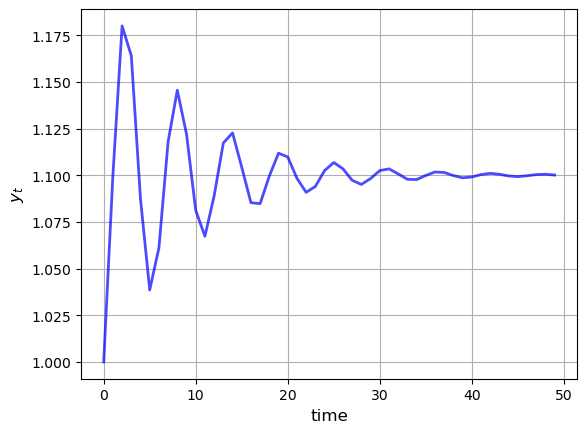

The next figure shows the dynamics of this process when $\phi_0 = 1.1, \phi_1 = 0.8, \phi_2 = -0.8$, and $y_0 = y_{-1} = 1$.

def plot_lss(A,
             C,
             G,
             n=3,
             ts_length=50):

    # Start the state at x_0 = (1, ..., 1)' and simulate one path
    ar = LinearStateSpace(A, C, G, mu_0=np.ones(n))
    x, y = ar.simulate(ts_length)

    # Plot the observation sequence y_t
    fig, ax = plt.subplots()
    y = y.flatten()
    ax.plot(y, 'b-', lw=2, alpha=0.7)
    ax.grid()
    ax.set_xlabel('time', fontsize=12)
    ax.set_ylabel('$y_t$', fontsize=12)
    plt.show()

ϕ_0, ϕ_1, ϕ_2 = 1.1, 0.8, -0.8

A = [[1,   0,   0  ],
     [ϕ_0, ϕ_1, ϕ_2],
     [0,   1,   0  ]]

C = np.zeros((3, 1))
G = [0, 1, 0]

plot_lss(A, C, G)

Later you’ll be asked to recreate this figure.

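21.2.2.2. Univariate Autoregressive Processes

We can use (21.1) to represent the model

$$
y_{t+1} = \phi_1 y_t + \phi_2 y_{t-1} + \phi_3 y_{t-2} + \phi_4 y_{t-3} + \sigma w_{t+1}
\tag{21.3}
$$

where $\{w_t\}$ is IID and standard normal.

To put this in the linear state space format we take $x_t = \begin{bmatrix} y_t & y_{t-1} & y_{t-2} & y_{t-3} \end{bmatrix}'$ and

$$
A =
\begin{bmatrix}
\phi_1 & \phi_2 & \phi_3 & \phi_4 \\
1 & 0 & 0 & 0 \\
0 & 1 & 0 & 0 \\
0 & 0 & 1 & 0
\end{bmatrix}
\qquad
C =
\begin{bmatrix} \sigma \\ 0 \\ 0 \\ 0 \end{bmatrix}
\qquad
G = \begin{bmatrix} 1 & 0 & 0 & 0 \end{bmatrix}
$$

The matrix $A$ has the form of the companion matrix to the vector $\begin{bmatrix} \phi_1 & \phi_2 & \phi_3 & \phi_4 \end{bmatrix}$.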
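The companion-matrix construction extends to any lag length $p$. Here is a hedged sketch, assuming NumPy; `ar_to_lss` is a hypothetical helper, not a QuantEcon function.

```python
import numpy as np


def ar_to_lss(phis, sigma):
    """Build (A, C, G) for y_{t+1} = sum_j phi_j y_{t+1-j} + sigma w_{t+1}.

    Hypothetical helper; A is the companion matrix of phis.
    """
    p = len(phis)
    A = np.zeros((p, p))
    A[0, :] = phis                 # first row holds the AR coefficients
    A[1:, :-1] = np.eye(p - 1)     # ones on the sub-diagonal shift the lags down
    C = np.zeros((p, 1))
    C[0, 0] = sigma                # the shock enters only the first equation
    G = np.zeros((1, p))
    G[0, 0] = 1.0                  # observe the current value y_t
    return A, C, G
```

For the parameter values used in the next figure, `ar_to_lss([0.5, -0.2, 0, 0.5], 0.2)` reproduces `A_1`, `C_1` and `G_1` below as NumPy arrays.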

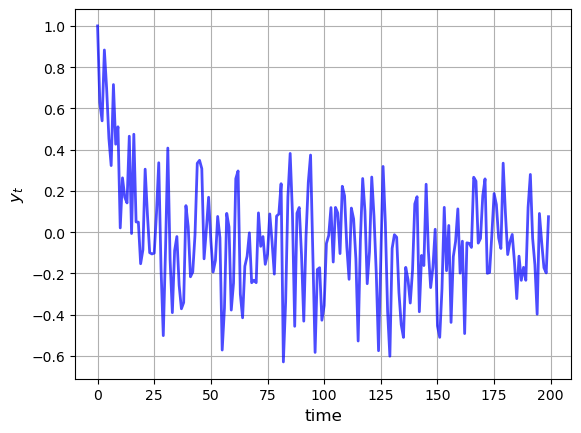

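The next figure shows the dynamics of this process when $\phi_1 = 0.5, \phi_2 = -0.2, \phi_3 = 0, \phi_4 = 0.5, \sigma = 0.2$, and $y_0 = y_{-1} = y_{-2} = y_{-3} = 1$.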

ϕ_1, ϕ_2, ϕ_3, ϕ_4 = 0.5, -0.2, 0, 0.5
σ = 0.2

A_1 = [[ϕ_1, ϕ_2, ϕ_3, ϕ_4],
       [1,   0,   0,   0  ],
       [0,   1,   0,   0  ],
       [0,   0,   1,   0  ]]

C_1 = [[σ],
       [0],
       [0],
       [0]]

G_1 = [1, 0, 0, 0]

plot_lss(A_1, C_1, G_1, n=4, ts_length=200)

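21.2.2.3. Vector Autoregressions

Now suppose that

- $y_t$ is a $k \times 1$ vector
- $\phi_j$ is a $k \times k$ matrix and
- $w_t$ is $k \times 1$

Then (21.3) is termed a vector autoregression.

To map this into (21.1), we set

$$
x_t =
\begin{bmatrix} y_t \\ y_{t-1} \\ y_{t-2} \\ y_{t-3} \end{bmatrix}
\qquad
A =
\begin{bmatrix}
\phi_1 & \phi_2 & \phi_3 & \phi_4 \\
I & 0 & 0 & 0 \\
0 & I & 0 & 0 \\
0 & 0 & I & 0
\end{bmatrix}
\qquad
C =
\begin{bmatrix} \sigma \\ 0 \\ 0 \\ 0 \end{bmatrix}
\qquad
G = \begin{bmatrix} I & 0 & 0 & 0 \end{bmatrix}
$$

where $I$ is the $k \times k$ identity matrix and $\sigma$ is a $k \times k$ matrix.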

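21.2.2.4. Seasonals

We can use (21.1) to represent

1. the deterministic seasonal $y_t = y_{t-4}$
2. the indeterministic seasonal $y_t = \phi_4 y_{t-4} + w_t$

In fact, both are special cases of (21.3).

With the deterministic seasonal, the transition matrix becomes

$$
A =
\begin{bmatrix}
0 & 0 & 0 & 1 \\
1 & 0 & 0 & 0 \\
0 & 1 & 0 & 0 \\
0 & 0 & 1 & 0
\end{bmatrix}
$$

It is easy to check that $A^4 = I$, which implies that $x_t$ is strictly periodic with period 4:

$$
x_{t+4} = x_t
$$

Such an $x_t$ process can be used to model deterministic seasonals in quarterly time series.

The indeterministic seasonal produces recurrent, but aperiodic, seasonal fluctuations.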
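The claim $A^4 = I$ for the deterministic seasonal is easy to verify numerically. Here is a minimal NumPy sketch; the initial "quarterly" values are arbitrary.

```python
import numpy as np

# Transition matrix of the deterministic seasonal y_t = y_{t-4},
# with state x_t = (y_t, y_{t-1}, y_{t-2}, y_{t-3})'
A = np.array([[0, 0, 0, 1],
              [1, 0, 0, 0],
              [0, 1, 0, 0],
              [0, 0, 1, 0]])

A4 = np.linalg.matrix_power(A, 4)      # should equal the identity

# Iterate from arbitrary initial values; the path repeats every 4 steps
x0 = np.array([1.0, 2.0, 3.0, 4.0])
path = [x0]
for _ in range(8):
    path.append(A @ path[-1])
y = [x[0] for x in path]               # y_t is the first state component
```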

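21.2.2.5. Time Trends

The model $y_t = a t + b$ is known as a linear time trend.

We can represent this model in the linear state space form by taking

$$
A =
\begin{bmatrix} 1 & 1 \\ 0 & 1 \end{bmatrix}
\qquad
C =
\begin{bmatrix} 0 \\ 0 \end{bmatrix}
\qquad
G = \begin{bmatrix} a & b \end{bmatrix}
$$

and starting at initial condition $x_0 = \begin{bmatrix} 0 & 1 \end{bmatrix}'$.

In fact, it’s possible to use the state-space system to represent polynomial trends of any order.

For instance, we can represent the model $y_t = a t^2 + b t + c$ in the linear state space form by taking

$$
A =
\begin{bmatrix} 1 & 1 & 0 \\ 0 & 1 & 1 \\ 0 & 0 & 1 \end{bmatrix}
\qquad
C =
\begin{bmatrix} 0 \\ 0 \\ 0 \end{bmatrix}
\qquad
G = \begin{bmatrix} 2a & a + b & c \end{bmatrix}
$$

and starting at initial condition $x_0 = \begin{bmatrix} 0 & 0 & 1 \end{bmatrix}'$.

It follows that

$$
A^t =
\begin{bmatrix}
1 & t & t(t-1)/2 \\
0 & 1 & t \\
0 & 0 & 1
\end{bmatrix}
$$

Then $x_t' = \begin{bmatrix} t(t-1)/2 & t & 1 \end{bmatrix}$. You can now confirm that $y_t = G x_t = a t^2 + b t + c$, as required.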
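A quick numerical check that the quadratic-trend matrices reproduce $a t^2 + b t + c$, assuming NumPy; `quadratic_trend_lss` is a hypothetical helper name.

```python
import numpy as np


def quadratic_trend_lss(a, b, c, T):
    """Return y_t = G x_t for t = 0, ..., T-1, generated by the state-space
    form of a t^2 + b t + c. Hypothetical helper for illustration."""
    A = np.array([[1.0, 1.0, 0.0],
                  [0.0, 1.0, 1.0],
                  [0.0, 0.0, 1.0]])
    G = np.array([2 * a, a + b, c])
    x = np.array([0.0, 0.0, 1.0])     # initial condition x_0 = (0, 0, 1)'
    ys = []
    for _ in range(T):
        ys.append(G @ x)
        x = A @ x                     # deterministic: C = 0
    return np.array(ys)
```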

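21.2.3. Moving Average Representations

A nonrecursive expression for $x_t$ as a function of $x_0, w_1, w_2, \ldots, w_t$ can be found by using (21.1) repeatedly to obtain

$$
\begin{aligned}
x_t & = A x_{t-1} + C w_t \\
& = A^2 x_{t-2} + A C w_{t-1} + C w_t \\
& \qquad \vdots \\
& = \sum_{j=0}^{t-1} A^j C w_{t-j} + A^t x_0
\end{aligned}
\tag{21.5}
$$

Representation (21.5) is a moving average representation.

It expresses $\{x_t\}$ as a linear function of

1. current and past values of the process $\{w_t\}$ and
2. the initial condition $x_0$

As an example of a moving average representation, let the model be

$$
A =
\begin{bmatrix} 1 & 1 \\ 0 & 1 \end{bmatrix}
\qquad
C =
\begin{bmatrix} 1 \\ 0 \end{bmatrix}
$$

You will be able to show that $A^t = \begin{bmatrix} 1 & t \\ 0 & 1 \end{bmatrix}$ and $A^j C = \begin{bmatrix} 1 & 0 \end{bmatrix}'$.

Substituting into the moving average representation (21.5), we obtain

$$
x_{1t} = \sum_{j=0}^{t-1} w_{t-j} + \begin{bmatrix} 1 & t \end{bmatrix} x_0
$$

where $x_{1t}$ is the first entry of $x_t$.

The first term on the right is a cumulated sum of martingale differences and is therefore a martingale.

The second term is a translated linear function of time.

For this reason, $x_{1t}$ is called a martingale with drift.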
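For the same shocks, the moving average representation (21.5) and the recursion (21.1) should give identical states. The sketch below checks this for the martingale-with-drift example, whose first state component should equal the cumulated shocks plus $\begin{bmatrix} 1 & t \end{bmatrix} x_0$. It assumes NumPy; the helper names `ma_representation` and `recursive` are hypothetical.

```python
import numpy as np


def ma_representation(A, C, x0, w):
    """x_t from (21.5): sum_{j=0}^{t-1} A^j C w_{t-j} + A^t x_0.

    `w` stacks w_1, ..., w_t as rows, so w[0] is w_1 and w_{t-j} is w[t-1-j].
    Hypothetical helper for illustration.
    """
    t = len(w)
    x = np.linalg.matrix_power(A, t) @ x0
    for j in range(t):
        x = x + np.linalg.matrix_power(A, j) @ C @ w[t - 1 - j]
    return x


def recursive(A, C, x0, w):
    """Iterate x_{s+1} = A x_s + C w_{s+1} for s = 0, ..., t-1."""
    x = x0
    for w_next in w:
        x = A @ x + C @ w_next
    return x
```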

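21.3. Distributions and Moments

21.3.1. Unconditional Moments

Using (21.1), it’s easy to obtain expressions for the (unconditional) means of $x_t$ and $y_t$.

We’ll explain what unconditional and conditional mean soon.

Letting $\mu_t := \mathbb{E}[x_t]$ and using linearity of expectations, we find that

$$
\mu_{t+1} = A \mu_t
\quad \text{with} \quad \mu_0 \text{ given}
\tag{21.6}
$$

Here $\mu_0$ is a primitive given in (21.1).

The variance-covariance matrix of $x_t$ is $\Sigma_t := \mathbb{E}[(x_t - \mu_t)(x_t - \mu_t)']$.

Using $x_{t+1} - \mu_{t+1} = A(x_t - \mu_t) + C w_{t+1}$ and the fact that $w_{t+1}$ is independent of $x_t$ with identity covariance, we can determine this matrix recursively via

$$
\Sigma_{t+1} = A \Sigma_t A' + C C'
\quad \text{with} \quad \Sigma_0 \text{ given}
\tag{21.7}
$$

As with $\mu_0$, the matrix $\Sigma_0$ is a primitive given in (21.1).

As a matter of terminology, we will sometimes call

- $\mu_t$ the unconditional mean of $x_t$
- $\Sigma_t$ the unconditional variance-covariance matrix of $x_t$

This is to distinguish $\mu_t$ and $\Sigma_t$ from related objects that use conditioning information, to be defined below.

However, you should be aware that these “unconditional” moments do depend on the initial distribution $N(\mu_0, \Sigma_0)$.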

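21.3.1.1. Moments of the Observables

Using linearity of expectations again we have

$$
\mathbb{E}[y_t] = \mathbb{E}[G x_t] = G \mu_t
\tag{21.8}
$$

The variance-covariance matrix of $y_t$ is easily shown to be

$$
\textrm{Var}[y_t] = \textrm{Var}[G x_t] = G \Sigma_t G'
\tag{21.9}
$$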
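The recursions (21.6)–(21.9) are straightforward to iterate. Here is a minimal NumPy sketch with a hypothetical helper `moment_path`. QuantEcon's `LinearStateSpace` provides a `moment_sequence` generator for this purpose, used later in the lecture; this standalone version is only illustrative.

```python
import numpy as np


def moment_path(A, C, G, mu_0, Sigma_0, T):
    """Iterate (21.6)-(21.7) and map to (21.8)-(21.9) for t = 0, ..., T-1.

    Hypothetical helper; returns a list of tuples
    (mu_t, Sigma_t, G mu_t, G Sigma_t G').
    """
    A, C, G = (np.atleast_2d(np.asarray(M, dtype=float)) for M in (A, C, G))
    mu = np.asarray(mu_0, dtype=float)
    Sigma = np.asarray(Sigma_0, dtype=float)
    out = []
    for _ in range(T):
        out.append((mu, Sigma, G @ mu, G @ Sigma @ G.T))
        mu = A @ mu                         # (21.6)
        Sigma = A @ Sigma @ A.T + C @ C.T   # (21.7)
    return out
```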

21.3.2. Distributions

In general, knowing the mean and variance-covariance matrix of a random vector is not quite as good as knowing the full distribution.

However, there are some situations where these moments alone tell us all we need to know.

These are situations in which the mean vector and covariance matrix are all of the parameters that pin down the population distribution.

One such situation is when the vector in question is Gaussian (i.e., normally distributed).

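This is the case here, given

1. our Gaussian assumptions on the primitives
2. the fact that normality is preserved under linear operations

In fact, it’s well-known that

$$
u \sim N(\bar u, S)
\quad \text{and} \quad
v = a + B u
\implies
v \sim N(a + B \bar u, B S B')
$$

In particular, given our Gaussian assumptions on the primitives and the linearity of (21.1) we can see immediately that both $x_t$ and $y_t$ are Gaussian for all $t \geq 0$.

Since $x_t$ is Gaussian, to find its distribution all we need to do is find its mean and variance-covariance matrix.

But in fact we’ve already done this, in (21.6) and (21.7).

Letting $\mu_t$ and $\Sigma_t$ be as defined by these equations, we have

$$
x_t \sim N(\mu_t, \Sigma_t)
\tag{21.11}
$$

By similar reasoning combined with (21.8) and (21.9),

$$
y_t \sim N(G \mu_t, G \Sigma_t G')
\tag{21.12}
$$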

21.3.3. Ensemble Interpretations

How should we interpret the distributions defined by (21.11)–(21.12)?

Intuitively, the probabilities in a distribution correspond to relative frequencies in a large population drawn from that distribution.

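Let’s apply this idea to our setting, focusing on the distribution of $y_T$ for fixed $T$.

We can generate independent draws of $y_T$ by repeatedly simulating the evolution of the system up to time $T$, using an independent set of shocks each time.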

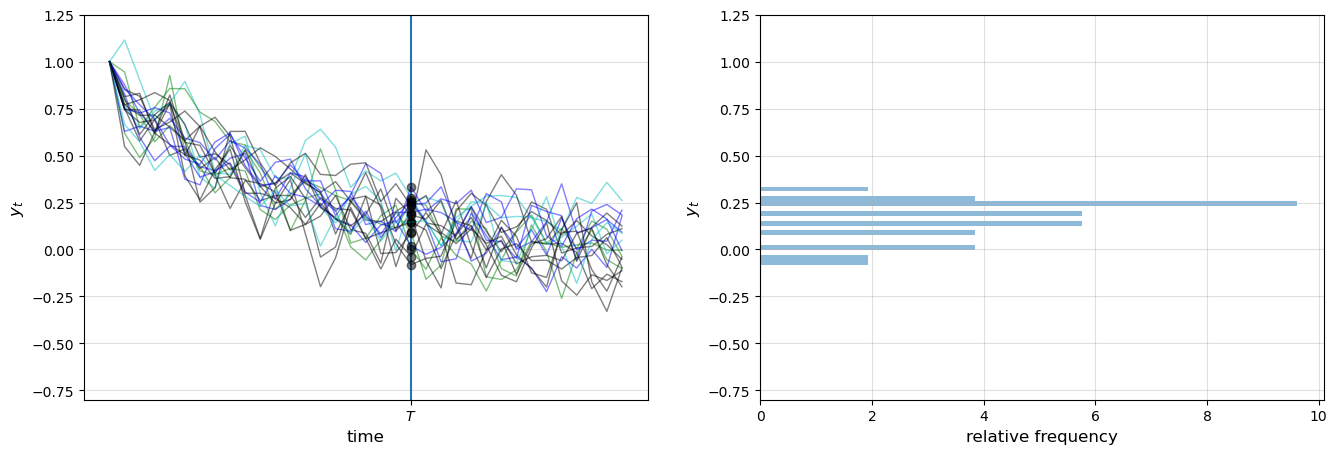

The next figure shows 20 simulations, producing 20 time series for $\{y_t\}$, and hence 20 draws of $y_T$.

The system in question is the univariate autoregressive model (21.3).

The values of $y_T$ are represented by black dots in the left-hand figure.

def cross_section_plot(A,
                       C,
                       G,
                       T=20,            # date T at which y_T is recorded
                       ymin=-0.8,
                       ymax=1.25,
                       sample_size=20,  # number of independent simulations
                       n=4):            # dimension of the state x_t (and of mu_0)

    ar = LinearStateSpace(A, C, G, mu_0=np.ones(n))

    fig, axes = plt.subplots(1, 2, figsize=(16, 5))
    for ax in axes:
        ax.grid(alpha=0.4)
        ax.set_ylim(ymin, ymax)

    # Left panel: sample paths, with each y_T marked by a black dot
    ax = axes[0]
    ax.set_ylabel('$y_t$', fontsize=12)
    ax.set_xlabel('time', fontsize=12)
    ax.vlines((T,), -1.5, 1.5)
    ax.set_xticks((T,))
    ax.set_xticklabels(('$T$',))

    sample = []
    for i in range(sample_size):
        rcolor = random.choice(('c', 'g', 'b', 'k'))
        x, y = ar.simulate(ts_length=T+15)
        y = y.flatten()
        ax.plot(y, color=rcolor, lw=1, alpha=0.5)
        ax.plot((T,), (y[T],), 'ko', alpha=0.5)
        sample.append(y[T])

    # Right panel: rotated histogram of the draws of y_T
    axes[1].set_ylabel('$y_t$', fontsize=12)
    axes[1].set_xlabel('relative frequency', fontsize=12)
    axes[1].hist(sample, bins=16, density=True, orientation='horizontal', alpha=0.5)

    plt.show()

ϕ_1, ϕ_2, ϕ_3, ϕ_4 = 0.5, -0.2, 0, 0.5
σ = 0.1

A_2 = [[ϕ_1, ϕ_2, ϕ_3, ϕ_4],
       [1,   0,   0,   0  ],
       [0,   1,   0,   0  ],
       [0,   0,   1,   0  ]]

C_2 = [[σ], [0], [0], [0]]

G_2 = [1, 0, 0, 0]

cross_section_plot(A_2, C_2, G_2)

In the right-hand figure, these values are converted into a rotated histogram that shows relative frequencies from our sample of 20 $y_T$’s.

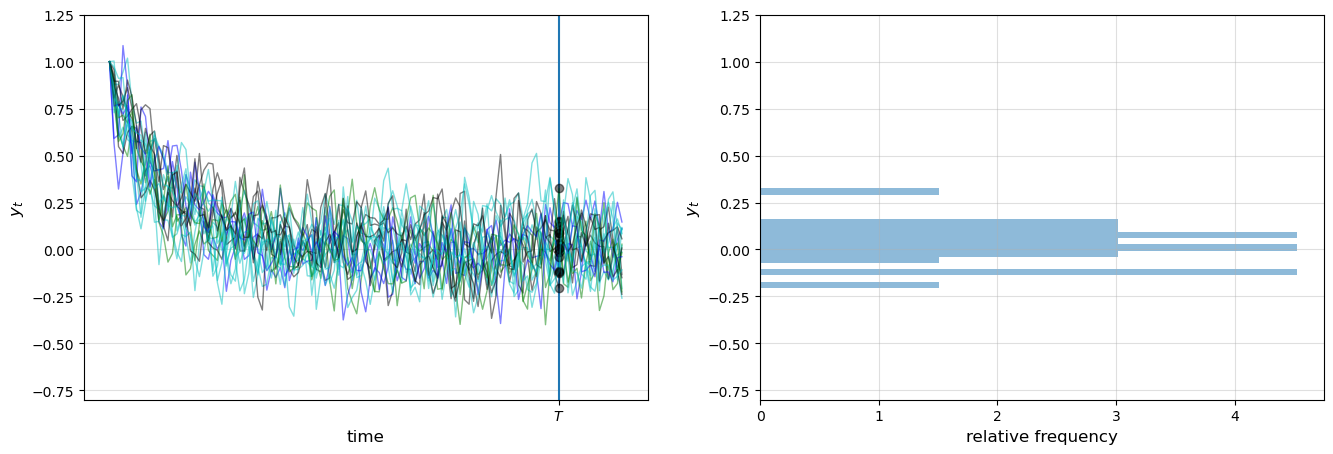

Here is another figure, this time with 100 observations.

cross_section_plot(A_2, C_2, G_2, sample_size=100)

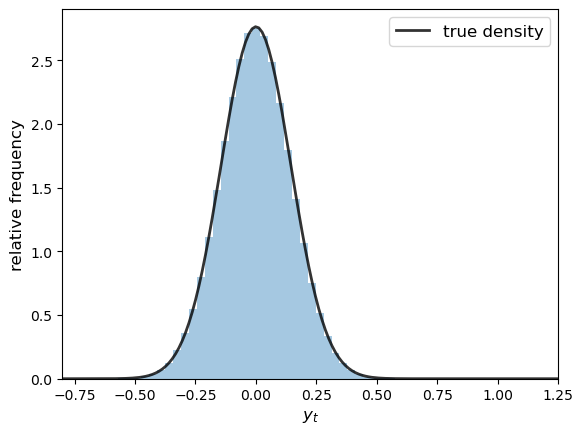

Let’s now try with 500,000 observations from a single long simulation, showing only the histogram (without rotation).

ymin = -0.8
ymax = 1.25
sample_size = 500_000

ar = LinearStateSpace(A_2, C_2, G_2, mu_0=np.ones(4))
fig, ax = plt.subplots()

# One long simulated path of length sample_size
x, y = ar.simulate(sample_size)

# Stationary moments of x_t and y_t; f_y is the stationary density of y_t
mu_x, mu_y, Sigma_x, Sigma_y, Sigma_yx = ar.stationary_distributions()
f_y = norm(loc=float(mu_y.item()), scale=float(np.sqrt(Sigma_y.item())))

y = y.flatten()
ygrid = np.linspace(ymin, ymax, 150)

ax.hist(y, bins=50, density=True, alpha=0.4)
ax.plot(ygrid, f_y.pdf(ygrid), 'k-', lw=2, alpha=0.8, label='true density')
ax.set_xlim(ymin, ymax)
ax.set_xlabel('$y_t$', fontsize=12)
ax.set_ylabel('relative frequency', fontsize=12)
ax.legend(fontsize=12)
plt.show()

The black line is the population density of $y_t$ under the stationary distribution, computed with the stationary_distributions method.

The histogram and population distribution are close, as expected.

By looking at the figures and experimenting with parameters, you will gain a feel for how the population distribution depends on the model primitives listed above, as intermediated by the distribution’s parameters.

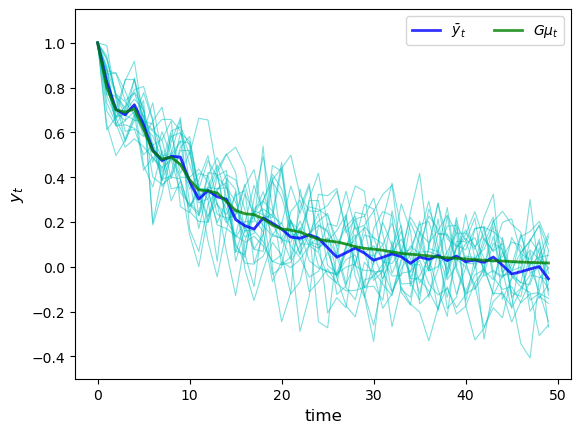

21.3.3.1. Ensemble Means

In the preceding figure, we approximated the population distribution of $y_T$ by

1. generating $I$ sample paths (i.e., time series) where $I$ is a large number
2. recording each observation $y^i_T$
3. histogramming this sample

Just as the histogram approximates the population distribution, the ensemble or cross-sectional average

$$
\bar y_T := \frac{1}{I} \sum_{i=1}^I y_T^i
$$

approximates the expectation $\mathbb{E}[y_T] = G \mu_T$ (as implied by the law of large numbers).

Here’s a simulation comparing the ensemble averages and population means at time points $t = 0, \ldots, 49$.

The parameters are the same as for the preceding figures, and the sample size is relatively small ($I = 20$).

I = 20
T = 50
ymin = -0.5
ymax = 1.15

ar = LinearStateSpace(A_2, C_2, G_2, mu_0=np.ones(4))

fig, ax = plt.subplots()

# Plot I sample paths and accumulate their cross-sectional average
ensemble_mean = np.zeros(T)
for i in range(I):
    x, y = ar.simulate(ts_length=T)
    y = y.flatten()
    ax.plot(y, 'c-', lw=0.8, alpha=0.5)
    ensemble_mean = ensemble_mean + y

ensemble_mean = ensemble_mean / I
ax.plot(ensemble_mean, color='b', lw=2, alpha=0.8, label='$\\bar y_t$')

# Population means G μ_t from the generator moment_sequence()
m = ar.moment_sequence()
population_means = []
for t in range(T):
    μ_x, μ_y, Σ_x, Σ_y = next(m)
    population_means.append(float(μ_y.item()))

ax.plot(population_means, color='g', lw=2, alpha=0.8, label=r'$G\mu_t$')
ax.set_ylim(ymin, ymax)
ax.set_xlabel('time', fontsize=12)
ax.set_ylabel('$y_t$', fontsize=12)
ax.legend(ncol=2)
plt.show()

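The ensemble mean for $x_t$ is

$$
\bar x_T := \frac{1}{I} \sum_{i=1}^I x_T^i \to \mu_T
\qquad (I \to \infty)
$$

The limit $\mu_T$ is a “long-run average”.

(By long-run average we mean the average for an infinite ($I = \infty$) number of sample $x_T$’s.)

Another application of the law of large numbers assures us that

$$
\frac{1}{I} \sum_{i=1}^I (x_T^i - \bar x_T) (x_T^i - \bar x_T)' \to \Sigma_T
\qquad (I \to \infty)
$$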
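These law-of-large-numbers statements can be checked with a cross-section of simulated paths. The sketch below uses the AR(4) parameters from the preceding figures ($\sigma = 0.1$) and a fixed initial condition $x_0 = \mu_0$, so that $\Sigma_0 = 0$. It assumes NumPy; `ensemble_moments` and `population_moments` are hypothetical helpers.

```python
import numpy as np


def ensemble_moments(A, C, mu_0, T, I, seed=1234):
    """Simulate I independent paths from x_0 = mu_0 and return the
    cross-sectional mean and covariance of x_T. Hypothetical helper."""
    rng = np.random.default_rng(seed)
    m = C.shape[1]
    x = np.tile(mu_0, (I, 1))             # row i holds the state of path i
    for _ in range(T):
        w = rng.standard_normal((I, m))
        x = x @ A.T + w @ C.T             # x_{t+1} = A x_t + C w_{t+1}, row-wise
    x_bar = x.mean(axis=0)
    dev = x - x_bar
    S = dev.T @ dev / I                   # (1/I) sum (x_T^i - x_bar)(x_T^i - x_bar)'
    return x_bar, S


def population_moments(A, C, mu_0, T):
    """mu_T and Sigma_T from (21.6)-(21.7), starting from Sigma_0 = 0."""
    n = A.shape[0]
    mu, Sigma = mu_0.copy(), np.zeros((n, n))
    for _ in range(T):
        mu, Sigma = A @ mu, A @ Sigma @ A.T + C @ C.T
    return mu, Sigma
```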

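21.3.4. Joint Distributions

In the preceding discussion, we looked at the distributions of $x_t$ and $y_t$ in isolation.

This gives us useful information but doesn’t allow us to answer questions like

- what’s the probability that $x_t \geq 0$ for all $t$?
- what’s the probability that the process $\{y_t\}$ exceeds some value $a$ before falling below $b$?
- etc., etc.

Such questions concern the joint distributions of these sequences.

To compute the joint distribution of $x_0, x_1, \ldots, x_T$, recall that joint and conditional densities are linked by the rule

$$
p(x, y) = p(y \mid x) p(x)
\qquad \text{(joint = conditional} \times \text{marginal)}
$$

From this rule we get $p(x_0, x_1) = p(x_1 \mid x_0) p(x_0)$.

The Markov property $p(x_t \mid x_{t-1}, \ldots, x_0) = p(x_t \mid x_{t-1})$ and repeated applications of the preceding rule lead us to

$$
p(x_0, x_1, \ldots, x_T) = p(x_0) \prod_{t=0}^{T-1} p(x_{t+1} \mid x_t)
$$

The marginal $p(x_0)$ is just the primitive $N(\mu_0, \Sigma_0)$.

In view of (21.1), the conditional densities are

$$
p(x_{t+1} \mid x_t) = N(A x_t, C C')
$$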
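This factorization translates directly into a log-likelihood for an observed state path. Here is a hedged NumPy sketch, assuming $C C'$ and $\Sigma_0$ are nonsingular so that every Gaussian density exists. `gaussian_logpdf` and `path_loglik` are hypothetical helpers.

```python
import numpy as np


def gaussian_logpdf(x, mean, cov):
    """Log density of N(mean, cov) at x; cov is assumed positive definite."""
    k = len(x)
    diff = x - mean
    _, logdet = np.linalg.slogdet(cov)
    quad = diff @ np.linalg.solve(cov, diff)
    return -0.5 * (k * np.log(2 * np.pi) + logdet + quad)


def path_loglik(xs, A, C, mu_0, Sigma_0):
    """log p(x_0, ..., x_T) = log p(x_0) + sum_t log p(x_{t+1} | x_t),
    with p(x_{t+1} | x_t) = N(A x_t, C C'). Hypothetical helper."""
    CC = C @ C.T
    ll = gaussian_logpdf(xs[0], mu_0, Sigma_0)
    for t in range(len(xs) - 1):
        ll += gaussian_logpdf(xs[t + 1], A @ xs[t], CC)
    return ll
```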

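21.3.4.1. Autocovariance Functions

An important object related to the joint distribution is the autocovariance function

$$
\Sigma_{t+j, t} := \mathbb{E}[(x_{t+j} - \mu_{t+j})(x_t - \mu_t)']
$$

Elementary calculations show that

$$
\Sigma_{t+j, t} = A^j \Sigma_t
\tag{21.14}
$$

Notice that $\Sigma_{t+j,t}$ in general depends on both $j$, the gap between the two dates, and $t$, the earlier date.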
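Formula (21.14) can be checked against a cross-sectional estimate from many simulated paths. The sketch assumes NumPy and starts every path at $x_0 = \mu_0$, so $\Sigma_0 = 0$. The helpers `autocov` and `sample_autocov` are hypothetical.

```python
import numpy as np


def autocov(A, Sigma_t, j):
    """Population autocovariance Sigma_{t+j,t} = A^j Sigma_t, as in (21.14)."""
    return np.linalg.matrix_power(A, j) @ Sigma_t


def sample_autocov(A, C, mu_0, t, j, I=200_000, seed=7):
    """Cross-sectional estimate of E[(x_{t+j} - mu_{t+j})(x_t - mu_t)'] from
    I independent paths started at x_0 = mu_0. Hypothetical helper."""
    rng = np.random.default_rng(seed)
    m = C.shape[1]
    x = np.tile(mu_0, (I, 1))                 # row i holds path i's state

    def step(x):
        return x @ A.T + rng.standard_normal((I, m)) @ C.T

    for _ in range(t):
        x = step(x)
    x_t = x.copy()
    for _ in range(j):
        x = step(x)
    a = x - x.mean(axis=0)                    # deviations of x_{t+j}
    b = x_t - x_t.mean(axis=0)                # deviations of x_t
    return a.T @ b / I
```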

21.4. Stationarity and Ergodicity

Stationarity and ergodicity are two properties that, when they hold, greatly aid analysis of linear state space models.

Let’s start with the intuition.

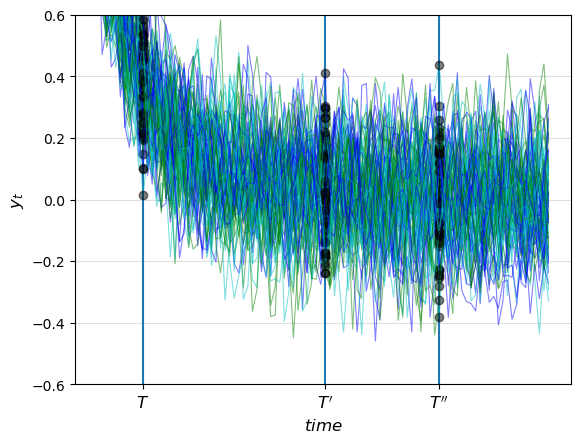

21.4.1. Visualizing Stability

Let’s look at some more time series from the same model that we analyzed above.

This picture shows cross-sectional distributions for $y$ at times $T, T', T''$.

def cross_plot(A,
               C,
               G,
               steady_state='False',
               T0=10,
               T1=50,
               T2=75,
               T4=100):

    ar = LinearStateSpace(A, C, G, mu_0=np.ones(4))

    if steady_state == 'True':
        # Start x_0 at the stationary distribution N(μ_∞, Σ_∞)
        μ_x, μ_y, Σ_x, Σ_y, Σ_yx = ar.stationary_distributions()
        ar_state = LinearStateSpace(A, C, G, mu_0=μ_x, Sigma_0=Σ_x)

    ymin, ymax = -0.6, 0.6
    fig, ax = plt.subplots()
    ax.grid(alpha=0.4)
    ax.set_ylim(ymin, ymax)
    ax.set_ylabel('$y_t$', fontsize=12)
    ax.set_xlabel('time', fontsize=12)
    ax.vlines((T0, T1, T2), -1.5, 1.5)
    ax.set_xticks((T0, T1, T2))
    ax.set_xticklabels(("$T$", "$T'$", "$T''$"), fontsize=12)

    # 80 sample paths, with y marked at the three dates T, T', T''
    for i in range(80):
        rcolor = random.choice(('c', 'g', 'b'))
        if steady_state == 'True':
            x, y = ar_state.simulate(ts_length=T4)
        else:
            x, y = ar.simulate(ts_length=T4)
        y = y.flatten()
        ax.plot(y, color=rcolor, lw=0.8, alpha=0.5)
        ax.plot((T0, T1, T2), (y[T0], y[T1], y[T2],), 'ko', alpha=0.5)

    plt.show()

cross_plot(A_2, C_2, G_2)

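Note how the time series “settle down” in the sense that the distributions at $T'$ and $T''$ are relatively similar to each other, but unlike the distribution at $T$.

Apparently, the distributions of $y_t$ converge to a fixed long-run distribution as $t \to \infty$.

When such a distribution exists it is called a stationary distribution.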

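21.4.2. Stationary Distributions

In our setting, a distribution $\psi_{\infty}$ is said to be stationary for $x_t$ if

$$
x_t \sim \psi_{\infty}
\quad \text{and} \quad
x_{t+1} = A x_t + C w_{t+1}
\quad \implies \quad
x_{t+1} \sim \psi_{\infty}
$$

Since

1. in the present case, all distributions are Gaussian
2. a Gaussian distribution is pinned down by its mean and variance-covariance matrix

we can restate the definition as follows: $\psi_{\infty}$ is stationary for $x_t$ if

$$
\psi_{\infty} = N(\mu_{\infty}, \Sigma_{\infty})
$$

where $\mu_{\infty}$ and $\Sigma_{\infty}$ are fixed points of (21.6) and (21.7) respectively.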
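When every eigenvalue of $A$ has modulus below one, the fixed point of (21.6) is $\mu_\infty = 0$, and $\Sigma_\infty$ can be found by iterating (21.7) to convergence. Here is a minimal NumPy sketch of that approach with a hypothetical helper `stationary_moments`. SciPy's `scipy.linalg.solve_discrete_lyapunov` solves the same fixed-point equation directly and is a reasonable alternative.

```python
import numpy as np


def stationary_moments(A, C, tol=1e-12, max_iter=100_000):
    """Fixed points (mu_inf, Sigma_inf) of (21.6)-(21.7), found by iterating
    (21.7) from Sigma = 0. Assumes every eigenvalue of A has modulus < 1,
    in which case mu_inf = 0. Hypothetical helper for illustration."""
    n = A.shape[0]
    if np.max(np.abs(np.linalg.eigvals(A))) >= 1:
        raise ValueError("A is not stable; this iteration need not converge")
    CC = C @ C.T
    Sigma = np.zeros((n, n))
    for _ in range(max_iter):
        Sigma_new = A @ Sigma @ A.T + CC
        if np.max(np.abs(Sigma_new - Sigma)) < tol:
            return np.zeros(n), Sigma_new
        Sigma = Sigma_new
    raise RuntimeError("iteration did not converge")
```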

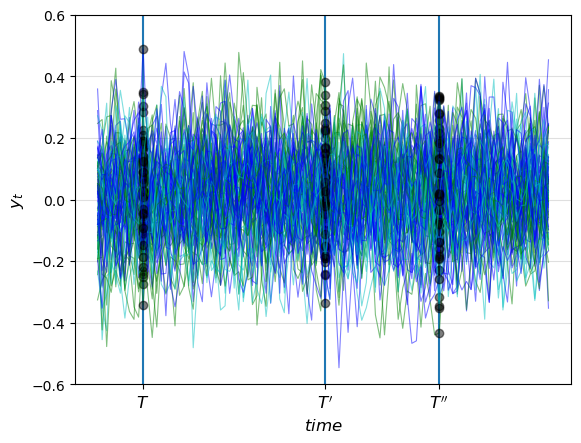

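21.4.3. Covariance Stationary Processes

Let’s see what happens to the preceding figure if we start $x_0$ at the stationary distribution.

cross_plot(A_2, C_2, G_2, steady_state='True')

Now the differences in the observed distributions at $T, T'$ and $T''$ come entirely from random fluctuations due to the finite sample size.

By

- our choosing $x_0 \sim N(\mu_{\infty}, \Sigma_{\infty})$
- the definitions of $\mu_{\infty}$ and $\Sigma_{\infty}$ as fixed points of (21.6) and (21.7) respectively

we’ve ensured that

$$
\mu_t = \mu_{\infty}
\quad \text{and} \quad
\Sigma_t = \Sigma_{\infty}
\quad \text{for all } t
$$

Moreover, in view of (21.14), the autocovariance function takes the form $\Sigma_{t+j,t} = A^j \Sigma_\infty$, which depends on $j$ but not on $t$.

This motivates the following definition.

A process $\{x_t\}$ is said to be covariance stationary if

- both $\mu_t$ and $\Sigma_t$ are constant in $t$
- $\Sigma_{t+j,t}$ depends on the time gap $j$ but not on time $t$

In our setting, $\{x_t\}$ will be covariance stationary if $\mu_0, \Sigma_0, A, C$ assume values that imply that none of $\mu_t, \Sigma_t, \Sigma_{t+j,t}$ depends on $t$.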

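21.4.4. Conditions for Stationarity

21.4.4.1. The Globally Stable Case

The difference equation $\mu_{t+1} = A \mu_t$ is known to have the unique fixed point $\mu_{\infty} = 0$ if all eigenvalues of $A$ have moduli strictly less than unity.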

That is, if (np.absolute(np.linalg.eigvals(A)) < 1).all() == True.

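The difference equation (21.7) also has a unique fixed point in this case, and, moreover

$$
\mu_t \to \mu_{\infty} = 0
\quad \text{and} \quad
\Sigma_t \to \Sigma_{\infty}
\quad \text{as} \quad t \to \infty
$$

regardless of the initial conditions $\mu_0$ and $\Sigma_0$.

This is the globally stable case; see these notes for a more theoretical treatment.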

However, global stability is more than we need for stationary solutions, and often more than we want.

To illustrate, consider our second order difference equation example.

Here the state is $x_t = \begin{bmatrix} 1 & y_t & y_{t-1} \end{bmatrix}'$.

Because of the constant first component in the state vector, we will never have $\mu_t \to 0$.

How can we find stationary solutions that respect a constant state component?

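21.4.4.2. Processes with a Constant State Component

To investigate such a process, suppose that $A$ and $C$ take the form

$$
A =
\begin{bmatrix} A_1 & a \\ 0 & 1 \end{bmatrix}
\qquad
C =
\begin{bmatrix} C_1 \\ 0 \end{bmatrix}
$$

where

- $A_1$ is an $(n-1) \times (n-1)$ matrix
- $a$ is an $(n-1) \times 1$ column vector

Let $x_t = \begin{bmatrix} x_{1t}' & 1 \end{bmatrix}'$ where $x_{1t}$ is $(n-1) \times 1$.

It follows that

$$
x_{1,t+1} = A_1 x_{1t} + a + C_1 w_{t+1}
$$

Let $\mu_{1t} = \mathbb{E}[x_{1t}]$ and take expectations on both sides of this expression to get

$$
\mu_{1,t+1} = A_1 \mu_{1,t} + a
\tag{21.15}
$$

Assume now that the moduli of the eigenvalues of $A_1$ are all strictly less than one.

Then (21.15) has a unique stationary solution, namely,

$$
\mu_{1\infty} = (I - A_1)^{-1} a
$$

The stationary value of $\mu_t$ itself is then $\mu_\infty := \begin{bmatrix} \mu_{1\infty}' & 1 \end{bmatrix}'$.

The stationary values of $\Sigma_t$ and $\Sigma_{t+j,t}$ satisfy

$$
\begin{aligned}
\Sigma_\infty & = A \Sigma_\infty A' + C C' \\
\Sigma_{t+j,t} & = A^j \Sigma_\infty
\end{aligned}
$$

Notice that here $\Sigma_{t+j,t}$ depends on the time gap $j$ but not on calendar time $t$.

In conclusion, if

- $x_0 \sim N(\mu_{\infty}, \Sigma_{\infty})$ and
- the moduli of the eigenvalues of $A_1$ are all strictly less than unity

then the $\{x_t\}$ process is covariance stationary, with constant state component.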

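Note: If the eigenvalues of $A_1$ are less than unity in modulus, then (a) starting from any initial value, the mean and variance-covariance matrix both converge to their stationary values; and (b) iterations on (21.7) converge to the fixed point of the discrete Lyapunov equation $\Sigma_\infty = A \Sigma_\infty A' + C C'$.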
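For the second-order difference equation example, stability needs to be checked on $A_1$ only. Reordering the state as $(y_t, y_{t-1}, 1)'$ puts the constant last, as in the partition above. The NumPy sketch below does this with the earlier parameter values and computes $\mu_{1\infty} = (I - A_1)^{-1} a$. Both entries should equal $\phi_0 / (1 - \phi_1 - \phi_2)$.

```python
import numpy as np

# Second-order example with the state reordered as (y_t, y_{t-1}, 1)',
# so that A = [[A_1, a], [0, 1]] as in the text
phi0, phi1, phi2 = 1.1, 0.8, -0.8
A_1 = np.array([[phi1, phi2],
                [1.0,  0.0]])
a = np.array([phi0, 0.0])

moduli = np.abs(np.linalg.eigvals(A_1))          # stability check on A_1 only
mu_1_inf = np.linalg.solve(np.eye(2) - A_1, a)   # (I - A_1)^{-1} a

# Iterating (21.15) from an arbitrary start should reach the same point
mu = np.array([1.0, 1.0])
for _ in range(500):
    mu = A_1 @ mu + a
```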

21.4.5. Ergodicity

Let’s suppose that we’re working with a covariance stationary process.

In this case, we know that the ensemble mean will converge to $\mu_{\infty}$ as the sample size $I$ approaches infinity.

21.4.5.1. Averages over Time

Ensemble averages across simulations are interesting theoretically, but in real life, we usually observe only a single realization $\{x_t, y_t\}_{t=0}^T$.

So now let’s take a single realization and form the time-series averages

$$
\bar x := \frac{1}{T} \sum_{t=1}^T x_t
\quad \text{and} \quad
\bar y := \frac{1}{T} \sum_{t=1}^T y_t
$$

Do these time series averages converge to something interpretable in terms of our basic state-space representation?

The answer depends on something called ergodicity.

Ergodicity is the property that time series and ensemble averages coincide.

More formally, ergodicity implies that time series sample averages converge to their expectation under the stationary distribution.

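In particular, as $T \to \infty$,

- $\frac{1}{T} \sum_{t=1}^T x_t \to \mu_{\infty}$
- $\frac{1}{T} \sum_{t=1}^T (x_t - \bar x_T)(x_t - \bar x_T)' \to \Sigma_\infty$
- $\frac{1}{T} \sum_{t=1}^T (x_{t+j} - \bar x_T)(x_t - \bar x_T)' \to A^j \Sigma_\infty$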

In our linear Gaussian setting, any covariance stationary process is also ergodic.
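To illustrate, take the scalar special case $y_{t+1} = \phi_0 + \phi_1 y_t + \sigma w_{t+1}$, i.e. state $x_t = (y_t, 1)'$ with a constant component. Its stationary mean, variance and lag-1 autocovariance are known in closed form: $\phi_0 / (1 - \phi_1)$, $\sigma^2 / (1 - \phi_1^2)$ and $\phi_1$ times the variance. The NumPy sketch below compares them with time averages from one long path. `time_averages` is a hypothetical helper.

```python
import numpy as np


def time_averages(phi0, phi1, sigma, T, seed=0):
    """Simulate one path of y_{t+1} = phi0 + phi1 y_t + sigma w_{t+1} and
    return its time-series mean, variance and lag-1 autocovariance.
    Hypothetical helper for illustration."""
    rng = np.random.default_rng(seed)
    w = rng.standard_normal(T)
    y = np.empty(T)
    y[0] = 0.0                           # arbitrary start; its effect dies out
    for t in range(T - 1):
        y[t + 1] = phi0 + phi1 * y[t] + sigma * w[t + 1]
    y_bar = y.mean()
    dev = y - y_bar
    return y_bar, np.mean(dev ** 2), np.mean(dev[1:] * dev[:-1])
```

With $\phi_0 = 1$, $\phi_1 = 0.5$ and $\sigma = 1$, the targets are $2$, $4/3$ and $2/3$.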

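21.5. Noisy Observations

In some settings, the observation equation $y_t = G x_t$ is modified to include an error term.

Often this error term represents the idea that the true state can only be observed imperfectly.

To include an error term in the observation we introduce

- An IID sequence of $\ell \times 1$ random vectors $v_t \sim N(0, I)$.
- A $k \times \ell$ matrix $H$.

and extend the linear state-space system to

$$
\begin{aligned}
x_{t+1} & = A x_t + C w_{t+1} \\
y_t & = G x_t + H v_t \\
x_0 & \sim N(\mu_0, \Sigma_0)
\end{aligned}
$$

The sequence $\{v_t\}$ is assumed to be independent of $\{w_t\}$.

The process $\{x_t\}$ is not modified by noise in the observation equation, and its moments, distributions and stability properties remain the same.

The unconditional moments of $y_t$ from (21.8) and (21.9) now become

$$
\mathbb{E}[y_t] = \mathbb{E}[G x_t + H v_t] = G \mu_t
$$

The variance-covariance matrix of $y_t$ is easily shown to be

$$
\textrm{Var}[y_t] = \textrm{Var}[G x_t + H v_t] = G \Sigma_t G' + H H'
$$

The distribution of $y_t$ is therefore

$$
y_t \sim N(G \mu_t, G \Sigma_t G' + H H')
$$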
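A Monte Carlo check of these formulas, assuming NumPy. The matrices below are arbitrary illustrative values, not taken from the lecture, and `noisy_observation_moments` is a hypothetical helper.

```python
import numpy as np


def noisy_observation_moments(G, H, mu_t, Sigma_t):
    """Mean and covariance of y_t = G x_t + H v_t when x_t ~ N(mu_t, Sigma_t)
    and v_t ~ N(0, I) is independent of x_t."""
    return G @ mu_t, G @ Sigma_t @ G.T + H @ H.T


# Arbitrary illustrative values (k = 2 observables, n = 2 states, l = 1)
rng = np.random.default_rng(3)
G = np.array([[1.0, 0.0], [1.0, 1.0]])
H = np.array([[0.5], [0.2]])
mu_t = np.array([1.0, -1.0])
Sigma_t = np.array([[2.0, 0.3], [0.3, 1.0]])

# Draw x_t and v_t independently, then form y_t row by row
N = 400_000
x = rng.multivariate_normal(mu_t, Sigma_t, size=N)
v = rng.standard_normal((N, 1))
y = x @ G.T + v @ H.T
mean_y, cov_y = noisy_observation_moments(G, H, mu_t, Sigma_t)
```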

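21.6. Prediction

The theory of prediction for linear state space systems is elegant and simple.

21.6.1. Forecasting Formulas – Conditional Means

The natural way to predict variables is to use conditional distributions.

For example, the optimal forecast of $x_{t+1}$ given information known at time $t$ is

$$
\mathbb{E}_t [x_{t+1}] := \mathbb{E}[x_{t+1} \mid x_t, x_{t-1}, \ldots, x_0] = A x_t
$$

The right-hand side follows from $x_{t+1} = A x_t + C w_{t+1}$ and the fact that $w_{t+1}$ is zero mean and independent of $x_t, x_{t-1}, \ldots, x_0$.

That $\mathbb{E}_t [x_{t+1}] = \mathbb{E}[x_{t+1} \mid x_t]$ is an implication of $\{x_t\}$ having the Markov property.

The one-step-ahead forecast error is

$$
x_{t+1} - \mathbb{E}_t [x_{t+1}] = C w_{t+1}
$$

The covariance matrix of the forecast error is

$$
\mathbb{E} [(x_{t+1} - \mathbb{E}_t [x_{t+1}]) (x_{t+1} - \mathbb{E}_t [x_{t+1}])'] = C C'
$$

More generally, we’d like to compute the $j$-step ahead forecasts $\mathbb{E}_t [x_{t+j}]$ and $\mathbb{E}_t [y_{t+j}]$.

With a bit of algebra, we obtain

$$
x_{t+j} = A^j x_t + A^{j-1} C w_{t+1} + A^{j-2} C w_{t+2} + \cdots + A^0 C w_{t+j}
$$

In view of the IID property, current and past state values provide no information about future values of the shock.

Hence $\mathbb{E}_t [w_{t+k}] = \mathbb{E}[w_{t+k}] = 0$.

It now follows from linearity of expectations that the $j$-step ahead forecast of $x$ is

$$
\mathbb{E}_t [x_{t+j}] = A^j x_t
$$

The $j$-step ahead forecast of $y$ is therefore

$$
\mathbb{E}_t [y_{t+j}] = \mathbb{E}_t [G x_{t+j} + H v_{t+j}] = G A^j x_t
$$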
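The forecasting formulas can be checked by simulating many continuations from the same $x_t$ and averaging. Here is a hedged NumPy sketch with arbitrary illustrative matrices. `forecast` and `simulate_ahead` are hypothetical helpers, and noise in the observation equation is omitted because it does not change $\mathbb{E}_t[y_{t+j}]$.

```python
import numpy as np


def forecast(A, G, x_t, j):
    """j-step-ahead forecasts E_t[x_{t+j}] = A^j x_t and E_t[y_{t+j}] = G A^j x_t."""
    Aj = np.linalg.matrix_power(A, j)
    return Aj @ x_t, G @ Aj @ x_t


def simulate_ahead(A, C, x_t, j, N, seed=11):
    """N independent draws of x_{t+j} given x_t, using fresh shocks
    w_{t+1}, ..., w_{t+j} for each draw. Hypothetical helper."""
    rng = np.random.default_rng(seed)
    x = np.tile(x_t, (N, 1))
    for _ in range(j):
        x = x @ A.T + rng.standard_normal((N, C.shape[1])) @ C.T
    return x
```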

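21.6.2. Covariance of Prediction Errors

It is useful to obtain the covariance matrix of the vector of $j$-step-ahead prediction errors

$$
x_{t+j} - \mathbb{E}_t [x_{t+j}] = \sum^{j-1}_{s=0} A^s C w_{t-s+j}
$$

Evidently,

$$
V_j := \mathbb{E}_t [(x_{t+j} - \mathbb{E}_t [x_{t+j}]) (x_{t+j} - \mathbb{E}_t [x_{t+j}])'] = \sum^{j-1}_{k=0} A^k C C' (A^k)'
$$

Splitting off the $k = 0$ term shows that $V_j$ can be computed recursively via $V_1 = C C'$ and $V_j = C C' + A V_{j-1} A'$ for $j \geq 2$.

Under particular conditions, $V_j$ converges to

$$
V_\infty = C C' + A V_\infty A'
\tag{21.23}
$$

Equation (21.23) is an example of a discrete Lyapunov equation in the covariance matrix $V_\infty$.

A sufficient condition for $V_j$ to converge is that the eigenvalues of $A$ be strictly less than one in modulus.

Weaker sufficient conditions for convergence associate eigenvalues equaling or exceeding one in modulus with elements of $C$ that equal $0$.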
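The sum formula and the recursion for $V_j$ agree, and with a stable $A$ the recursion settles at a solution of the Lyapunov equation (21.23). A minimal NumPy sketch with arbitrary illustrative matrices; `V_by_sum` and `V_by_recursion` are hypothetical helpers.

```python
import numpy as np


def V_by_sum(A, C, j):
    """V_j = sum_{k=0}^{j-1} A^k C C' (A^k)'."""
    n = A.shape[0]
    V = np.zeros((n, n))
    for k in range(j):
        Ak = np.linalg.matrix_power(A, k)
        V += Ak @ C @ C.T @ Ak.T
    return V


def V_by_recursion(A, C, j):
    """V_1 = C C' and V_j = C C' + A V_{j-1} A' for j >= 2."""
    V = C @ C.T
    for _ in range(j - 1):
        V = C @ C.T + A @ V @ A.T
    return V
```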

21.7. Code

Our preceding simulations and calculations are based on code in the file lss.py from the QuantEcon.py package.

The code implements a class for handling linear state space models (simulations, calculating moments, etc.).

One Python construct you might not be familiar with is the use of a generator function in the method moment_sequence().

Go back and read the relevant documentation if you’ve forgotten how generator functions work.

Examples of usage are given in the solutions to the exercises.

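21.8. Exercises

Exercise 21.1

In several contexts, we want to compute forecasts of geometric sums of future random variables governed by the linear state-space system (21.1).

We want the following objects:

- Forecast of a geometric sum of future $x$’s, or $\mathbb{E}_t \left[ \sum_{j=0}^\infty \beta^j x_{t+j} \right]$.
- Forecast of a geometric sum of future $y$’s, or $\mathbb{E}_t \left[ \sum_{j=0}^\infty \beta^j y_{t+j} \right]$.

These objects are important components of some famous and interesting dynamic models.

For example,

- if $\{y_t\}$ is a stream of dividends, then $\mathbb{E} \left[ \sum_{j=0}^\infty \beta^j y_{t+j} \mid x_t \right]$ is a model of a stock price
- if $\{y_t\}$ is the money supply, then $\mathbb{E} \left[ \sum_{j=0}^\infty \beta^j y_{t+j} \mid x_t \right]$ is a model of the price level

Show that:

$$
\mathbb{E}_t \left[ \sum_{j=0}^\infty \beta^j x_{t+j} \right] = [I - \beta A]^{-1} x_t
$$

and

$$
\mathbb{E}_t \left[ \sum_{j=0}^\infty \beta^j y_{t+j} \right] = G [I - \beta A]^{-1} x_t
$$

What must the modulus of every eigenvalue of $A$ be less than?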

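Solution to Exercise 21.1

Suppose that every eigenvalue of $A$ has modulus strictly less than $\frac{1}{\beta}$.

It then follows that $I + \beta A + \beta^2 A^2 + \cdots = [I - \beta A]^{-1}$.

This leads to our formulas:

- Forecast of a geometric sum of future $x$’s

$$
\mathbb{E}_t \left[ \sum_{j=0}^\infty \beta^j x_{t+j} \right] = [I + \beta A + \beta^2 A^2 + \cdots] x_t = [I - \beta A]^{-1} x_t
$$

- Forecast of a geometric sum of future $y$’s

$$
\mathbb{E}_t \left[ \sum_{j=0}^\infty \beta^j y_{t+j} \right] = G [I + \beta A + \beta^2 A^2 + \cdots] x_t = G [I - \beta A]^{-1} x_t
$$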
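A numerical check of these formulas, assuming NumPy, uses the second-order difference equation example. Its transition matrix has an eigenvalue equal to one from the constant state component, which is still allowed because $1 < 1/\beta$ when $\beta < 1$. The helpers `geometric_forecast` and `truncated_sum` are hypothetical.

```python
import numpy as np


def geometric_forecast(A, G, x_t, beta):
    """E_t[sum_j beta^j x_{t+j}] = (I - beta A)^{-1} x_t and the y analogue.
    Requires every eigenvalue of A to have modulus < 1/beta."""
    n = A.shape[0]
    if np.max(np.abs(np.linalg.eigvals(A))) >= 1 / beta:
        raise ValueError("geometric sum diverges: some |eigenvalue of A| >= 1/beta")
    sx = np.linalg.solve(np.eye(n) - beta * A, x_t)
    return sx, G @ sx


def truncated_sum(A, x_t, beta, J):
    """sum_{j=0}^{J-1} beta^j A^j x_t, for comparison."""
    total = np.zeros_like(x_t)
    term = x_t.copy()
    for _ in range(J):
        total = total + term
        term = beta * (A @ term)
    return total


phi0, phi1, phi2 = 1.1, 0.8, -0.8
A = np.array([[1.0, 0.0, 0.0],
              [phi0, phi1, phi2],
              [0.0, 1.0, 0.0]])
G = np.array([0.0, 1.0, 0.0])
x_t = np.ones(3)
sx, sy = geometric_forecast(A, G, x_t, 0.95)
```

The first entry of `sx` is the discounted sum of the constant component, $1 / (1 - \beta) = 20$.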